All of the software is installed, and for the most part GNOME and avant-window-navigator are working as expected. The hardware is beefy and should support 200+ users, but as soon as we get to about 20 users, things really start slowing down. We are seeing problems with networking and disk performance. They may be related, but I haven't yet been able to prove it.

We have cloned the server and moved it to VMware, and it's suffering the same problems, so I feel pretty confident it's not hardware. We are using this hardware elsewhere with no problems.

Disk IO Problem

This is the first time we have tried to deploy ext4. All of my Googling seems to indicate no major problems and no complaints about performance, and I did not see any "multi user" issues reported. If we have a few (10ish) people on the server, things work just fine. Once you get more, the disk becomes VERY slow. If I vi /etc/hosts (shot below), it just sits for 3-4 seconds with a blinking cursor before the file appears.

Under user load, if I run the passwd command it sits for 5 seconds after I enter a password before it completes. On other servers, even with hundreds of people, all of this happens instantly. If I run yast2 and install software under load, the whole server becomes very slow and unresponsive. We have never seen this on other releases of OpenSuse.
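One way to put numbers on these stalls is to time a plain read separately from a small synchronous write. The following is a minimal Python sketch, not a benchmark: the function names are made up for illustration, and it assumes the directory passed in sits on the filesystem under suspicion. A cached read will usually be fast, so a slow `fsync` with a fast read would point at synchronous writes rather than reads.

```python
import os
import tempfile
import time


def time_read(path):
    """Return the seconds taken to open `path` and read it completely."""
    start = time.perf_counter()
    with open(path, "rb") as handle:
        handle.read()
    return time.perf_counter() - start


def time_fsync_write(directory, size=4096):
    """Return the seconds taken to write `size` bytes to a temporary
    file in `directory` and force them to disk with fsync."""
    fd, name = tempfile.mkstemp(dir=directory)
    try:
        start = time.perf_counter()
        os.write(fd, b"\0" * size)
        os.fsync(fd)  # blocks until the data has been flushed
        return time.perf_counter() - start
    finally:
        os.close(fd)
        os.unlink(name)  # leave no test file behind
```

Running `time_read("/etc/hosts")` and `time_fsync_write("/tmp")` with 10 users and again with 20 or more would show which of the two degrades under load.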

While this might seem a clear IO problem, what also enters my head is that we are having some networking problems too, and I'm wondering if the NFS mounts are having problems that affect local disk IO. Unfortunately I cannot unmount the NFS mounts to prove that idea; too many necessary pieces are on remote file systems.

Networking

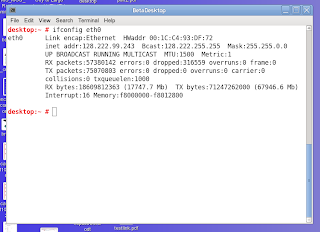

It seems that others are seeing dropped RX packets on OpenSuse 11.4 too. The first shot below is the desktop server; note the packets that are being dropped. This number climbs constantly, every few seconds.

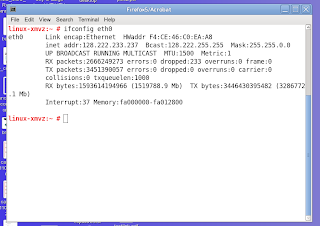

But note on this shot below from OpenSuse 11.3 how it *should* behave. This browser server has over 100 people using Firefox 5, and you can see it's being hammered on the network side, yet there are almost no dropped packets. This reflects how our other Linux servers work.
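To track how fast the dropped counter climbs without reading it off screenshots, the receive counters can be parsed from `/proc/net/dev`. This is a rough Python sketch under the assumption that the file has its usual layout (two header lines, then one line per interface with received packets in the second numeric column and drops in the fourth); the function names are illustrative only.

```python
def parse_rx_counters(text):
    """Parse the contents of /proc/net/dev into
    {interface: {"rx_packets": int, "rx_dropped": int}}."""
    stats = {}
    for line in text.splitlines()[2:]:  # skip the two header lines
        if ":" not in line:
            continue
        name, data = line.split(":", 1)
        fields = data.split()
        stats[name.strip()] = {
            "rx_packets": int(fields[1]),
            "rx_dropped": int(fields[3]),
        }
    return stats


def new_drops(before, after):
    """Return {interface: drops added between two parsed samples}."""
    return {
        name: after[name]["rx_dropped"] - before[name]["rx_dropped"]
        for name in after
        if name in before
    }
```

Taking one sample, waiting a few seconds, taking a second sample, and passing both to `new_drops` gives a drop rate per interface that can be compared between the 11.3 and 11.4 servers.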

I have noticed that certain NFS activities really slow the machine: when users are in Nautilus generating thumbnails, there is a noticeable slowdown for the other users. But it's not clear whether this is "networking" or "disk IO".

What's Next?

Aside from someone out there having intimate knowledge of OpenSuse 11.4 and a quick fix, we'll begin making drastic changes when I return. The first thing I'll do is grab the experimental 3.X kernel that I see on some of the OpenSuse channels. My inclination is that we have a kernel/scheduling problem, because it's affecting two subsystems. Currently I have upgraded to the latest stable release, kernel-desktop-2.6.37.6-0.5.1.x86_64. If that doesn't work, the next thing I'm going to do is reformat the server with ext3 to rule out a file system problem. This is a nasty step that will require redoing a lot of work that is already complete. But what we have now cannot be moved into production.

One other idea I had was that somehow the -desktop kernel is not suited for multiple users, and I could try moving to the more vanilla version; but it sure seems like this change won't make disk and networking better.

If you work on OpenSuse and have any information, it's greatly appreciated. We are anxious to move this server into production.

9 comments:

Hi Dave! It might be because (quite randomly) barriers are disabled by default on ext3 and enabled by default on ext4. Try mounting ext4 with nobarrier or barrier=0. Also, they threw out barriers in the 2.6.38 kernel if I remember and reworked it in another way...
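Before and after changing the mount options, it can help to confirm what the kernel actually reports for each filesystem. Here is a small Python sketch that parses text in the format of `/proc/mounts`; the function name is hypothetical, and because the default (and whether it is printed at all) varies by filesystem and kernel version, an absent option is reported as "not listed" rather than guessed.

```python
def barrier_settings(mounts_text, fstypes=("ext3", "ext4")):
    """Return {mount_point: (fstype, state)} for the given filesystem
    types, where state is "enabled", "disabled" or "not listed"."""
    result = {}
    for line in mounts_text.splitlines():
        parts = line.split()
        if len(parts) < 4 or parts[2] not in fstypes:
            continue
        options = parts[3].split(",")
        if "nobarrier" in options or "barrier=0" in options:
            state = "disabled"
        elif "barrier" in options or "barrier=1" in options:
            state = "enabled"
        else:
            state = "not listed"  # kernel is using its default
        result[parts[1]] = (parts[2], state)
    return result
```

Calling it as `barrier_settings(open("/proc/mounts").read())` lists every ext3 and ext4 mount with its reported barrier state.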

@Ernst: That is an awesome tip, and now reading about it I see it was merged in 2.6.30. Will try it!

Just be careful about barriers - if there's a crash/power outage/other, it's possible you could severely corrupt your filesystem. If you haven't already read this, check it out: http://blog.nirkabel.org/2008/12/07/ext3-write-barriers-and-write-caching/

@Jordan: We are looking at it now and will test it on our VM copy first. The way it is right now, it's just WAY too slow to deploy. We have gone to great lengths to ensure a steady flow of power to that room and will have to weigh the risk.

Also if your system is based on fast RAID storage, you at least want to set slice_idle to zero or even better switch to the deadline I/O scheduler.
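To see which I/O scheduler a disk is currently using, the kernel exposes a `scheduler` file under `/sys/block/<device>/queue/`, with the active choice shown in square brackets. A minimal Python sketch for reading it follows; the device name `sda` in the usage note is only an assumption, and the helper names are made up for illustration.

```python
import re


def active_scheduler(text):
    """Return the scheduler shown in brackets, e.g. "cfq" for
    "noop deadline [cfq]", or None if nothing is bracketed."""
    match = re.search(r"\[([^\]]+)\]", text)
    return match.group(1) if match else None


def available_schedulers(text):
    """Return every scheduler name listed, active or not."""
    return text.replace("[", "").replace("]", "").split()


def read_scheduler(device):
    """Read the scheduler line for a block device such as "sda"."""
    with open("/sys/block/%s/queue/scheduler" % device) as handle:
        return handle.read()
```

For example, `active_scheduler(read_scheduler("sda"))` reports the current choice, which is worth recording before switching to deadline so the two can be compared under the same load.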

RH also seems to prefer these sysctl tunings for the CPU scheduler on servers:

kernel.sched_min_granularity_ns = 10000000

kernel.sched_wakeup_granularity_ns = 15000000

and they also change the default dirty ratio on fast storage:

vm.dirty_ratio = 40

vm.dirty_background_ratio = 15
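Before applying these values, it may be useful to record what the server is running with now. The sketch below, in Python, compares the four settings listed above against the live values under `/proc/sys`; the helper names are hypothetical, and a key that does not exist on a given kernel is reported as `None` rather than treated as an error.

```python
import os

# Values taken from the list above.
SUGGESTED = {
    "kernel.sched_min_granularity_ns": 10000000,
    "kernel.sched_wakeup_granularity_ns": 15000000,
    "vm.dirty_ratio": 40,
    "vm.dirty_background_ratio": 15,
}


def read_sysctl(key, root="/proc/sys"):
    """Return the integer value of a sysctl key, or None if the key
    is missing or not a single integer."""
    path = os.path.join(root, *key.split("."))
    try:
        with open(path) as handle:
            return int(handle.read().strip())
    except (OSError, ValueError):
        return None


def compare(suggested, root="/proc/sys"):
    """Return {key: (current_value, suggested_value)}."""
    return {
        key: (read_sysctl(key, root), wanted)
        for key, wanted in suggested.items()
    }
```

`compare(SUGGESTED)` returns one pair per key, so the current and proposed values can be logged side by side before anything is changed.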

Those dropped packets seem very high. For my own setup of one X-terminal box and one fast box, on both sides I have no dropped packets for

(fast box) RX bytes:6047520022 (5.6 GiB) TX bytes:26017678486 (24.2 GiB)

and

(X-terminal)RX bytes:1280000223 (1.1 GiB) TX bytes:4125983194 (3.8 GiB)

For both boxes I run kernel 2.6.32-5-amd64, and for that case I can report severe slowdowns after roughly a week of up time. The issue acts as if we are running out of memory on the fast box so that every application is being run constantly from disk rather than memory. However, "top" shows no memory issues so I suspect the kernel-2.6.32 scheduler is just getting completely borked after a while. A reboot solves the issue until it happens again in roughly another week of up time.

Both users on our system run a KDE4 desktop and use it constantly for daily work consisting of browsing the web for Linux news and Linux application development.

I should have added that both our systems have Debian Squeeze installed, and we use the default Debian filesystem which is still ext3.

I had this problem a while ago, and it turned out not to be disk IO per se, but rather NSS hammering the LDAP server, which did not have any caching set up, every time it did a directory listing.

I guess if you have hundreds of users in /etc/passwd & /etc/group, that could be slow as well.
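A quick way to test this theory is to time user lookups directly, since a directory listing resolves owner ids to names through the same NSS path. This is a minimal Python sketch using the standard `pwd` module; the function name is made up, and which backend answers (local files, LDAP, a cache) depends entirely on the server's nsswitch configuration.

```python
import pwd
import time


def time_uid_lookups(uids):
    """Return {uid: seconds} for resolving each uid to a passwd entry
    through the system's configured name services."""
    timings = {}
    for uid in uids:
        start = time.perf_counter()
        try:
            pwd.getpwuid(uid)
        except KeyError:
            pass  # an unknown uid still exercises the lookup path
        timings[uid] = time.perf_counter() - start
    return timings
```

If looking up a handful of real user ids takes a noticeable fraction of a second each under load, name resolution rather than the disk would be a plausible source of the delay.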

Nice blog post
