In years past we always ran 100% of the software on the server, and it has worked great. In the last year, though, we successfully offloaded the RDP/Rdesktop client. The software we connect to with RDP is obviously still host based, but now we run the client on the local workstation. In the case of RDP, the users got a huge performance gain.

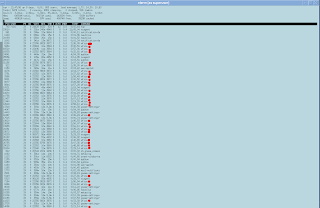

I'm reviewing all possible ideas for what should be offloaded to make use of distributed CPUs while still keeping the low costs of centralized servers. I have mentioned in the past that we'll certainly be doing the same thing with the ICA/Citrix client. The shot below was taken from our current GNOME server, and you can see that wfica (the Citrix client) chews CPU as the canvas is repainted. The server is certainly not taxed and we could get by running it in this manner, but I have a mind that tinkers and tunes, and this really should be offloaded. Maybe a part of me wants to see 250 users running at 1% busy. :) For sure, it will make the users' UI interaction crisper and more responsive. RDP will communicate with the server instead of using X11 to deliver the presentation.

A few times a month, our users stumble onto a page that just does not play well over remote display. It's usually Flash, and for some reason the player has problems and just cannot keep up. I have always suspected that the video was encoded at a very high frame rate, but I have never taken the time to verify that. As part of this whole process of reviewing which functions to offload, I have experimentally loaded Firefox 11 and Flash on the physical thin client. I wondered how it would work with access to the local video card.

I modified the master thin client to accept a request from the server side to start a browser. Those with this new build can click on an icon on the GNOME server, as pictured below.
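For readers wondering what such a request handler might look like, here is a minimal Python sketch. It is not the code running on our thin clients, just one plausible shape for the idea: a small listener on the thin client that accepts a one-line request and starts a whitelisted program locally. The port number, the `ALLOWED` table and the names `build_command`, `LaunchHandler`, `serve` and `request_launch` are all assumptions made up for illustration.

```python
import shlex
import socket
import socketserver
import subprocess

# Hypothetical whitelist: request keyword -> command started on the thin client.
ALLOWED = {"browser": ["firefox"]}


def build_command(request, allowed=ALLOWED):
    """Turn a request line such as 'browser http://example.com' into an argv list.

    Returns None if the line is malformed or the keyword is not whitelisted.
    """
    try:
        parts = shlex.split(request.strip())
    except ValueError:  # e.g. unbalanced quotes
        return None
    if not parts or parts[0] not in allowed:
        return None
    # Only http(s) URLs are passed through as arguments; anything else is dropped.
    urls = [p for p in parts[1:] if p.startswith(("http://", "https://"))]
    return allowed[parts[0]] + urls


class LaunchHandler(socketserver.StreamRequestHandler):
    """Runs on the thin client: reads one request line and launches the program."""

    def handle(self):
        line = self.rfile.readline().decode("utf-8", "replace")
        argv = build_command(line)
        if argv is None:
            self.wfile.write(b"DENIED\n")
            return
        subprocess.Popen(argv)  # starts the program on the thin client itself
        self.wfile.write(b"OK\n")


def serve(host="0.0.0.0", port=9999):
    """Thin client side: listen for launch requests until interrupted."""
    with socketserver.TCPServer((host, port), LaunchHandler) as server:
        server.serve_forever()


def request_launch(client_host, keyword, url="", port=9999):
    """Server side: what the desktop icon could call to ask for a local launch."""
    with socket.create_connection((client_host, port), timeout=5) as conn:
        conn.sendall(f"{keyword} {url}\n".encode("utf-8"))
        return conn.recv(16).decode("utf-8").strip()
```

In this sketch the thin client would run `serve()` at startup, and the icon on the GNOME server would call something like `request_launch(thin_client_address, "browser")`. The whitelist is the important design choice: the thin client only ever starts programs it already knows about, never an arbitrary command sent over the network. A real deployment would also want to restrict which hosts may connect.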

Firefox then runs locally. I didn't really know what to expect, and the results were interesting. Firefox was slower to start on the thin client than over the network from the big servers. I know that many people at other organizations have complained about thin client speeds, and I think this shows why: software should be tested both host based and locally, and the right fit deployed. The local version of Firefox didn't have the crisp response time in the pulldown menus and UI interaction, although it was certainly usable.

The big test was playing Flash content. My testing indicates that videos do not play faster locally, and in fact might be a bit slower. In the shot below you can see "top" running on the thin client, and Flash is just hammering it. It's clear that Flash will consume as much CPU as it can get. I was testing on the older 5725 HP thin clients; once I pack up another beta build, I'll test on the 5745s, which I believe will provide a slightly better experience.

All in all, I don't see any advantage to this design. Flash and Firefox constantly need upgrades, and the devices would probably have to be updated every few weeks. I can upgrade the server in just minutes and it's deployed for all users. We'd need a strong case for offloading browsers to the local device, and right now I'm not seeing it being worthwhile.

Current Projects: I have installed and am testing LibreOffice 3.5.2, and am crossing off items one by one on my list for the next thin client release.

5 comments:

Could you please explain how you offload from a remote X session to the local browser?

Anonymous 12:22PM

http://www.ltsp.org/

It is surprisingly simple because it was designed in.

There are other instructions at https://wiki.ubuntu.com/ltsp-localapps

You are not really offloading from a remote X session.

LTSP's offload to local provides the advantage of central management with local running, so it avoids the maintenance nightmare you are seeing, Dave Richards.

I guess the idea of placing the thin boot image on a server did not cross your mind. Have the clients powered off at the end of the shift/day and, like magic, all of them are upgraded.

The issue Dave Richards has just run into was kind of predicted, and it is well handled if you think through the solution the right way. It's very simple to think about it the wrong way.

This feature came out of the DRBL vs LTSP comparison a long time ago. http://drbl.sourceforge.net/

DRBL would beat LTSP hands down for particular things, because back then thin was the only option on LTSP. Since then, hybrid has been on offer.

Yes, whatever is well behaved as thin starts like a bat out of hell on a thin solution. Whatever is not well behaved disrupts the thin.

Most people don't think things like DRBL are possible, so when building these solutions they run into problems that are quite minor but look major.

Looking forward to your views on the LibreOffice upgrade from your perspective of supporting so many users.

Thanks for the good posts.

I guess the confusion is that you have Debian running on the thin client, as well as some other Linux distro on the remote end. Any thin client I've used doesn't have anything "running" on it, other than some firmware.

"Thank you for your detailed interpretation of thin clients.

Here I am introducing a versatile thin client terminal, the RDP XL-500.

We can use it as a

1) high end thin client device

2) Mini PC/Individual PC

3) Virtualization Ready "
