"If I am on a computer by myself and sometimes it's barely adequate...how can it possibly work having hundreds of people sharing a server?"

We hear this over and over again as we talk to others and explain our technology. I think they assume that you divide the CPU speed by the number of users and that somehow gives you your time slice.

In fact, once people see the speed of the software running at Largo, they are amazed and often say it runs faster than it does on their PCs. There are a few reasons for this:

* The servers are obviously bigger than any computer you would have at your desk.

* Even if you have hundreds of people on, at any given time only a fraction of that total is actually using the software.

* When you have enough memory to hold everyone in RAM, there is no swapping at all.

* You never really have a cold start of the software. Someone else in the City is always already running it.

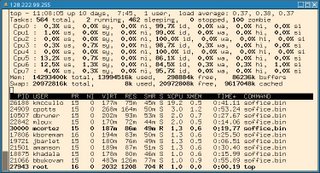

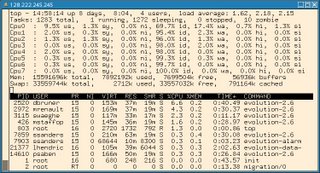

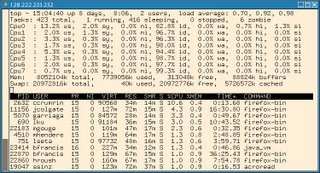

I thought I would show a few `top` screenshots to demonstrate how well Linux handles multiple users. I think we could easily fit 2-3 times more people on these servers if we had to. The most interesting thing to note is the CPU usage.

This shot is OpenOffice 2.0.3, with 85 concurrent users at the time:

This shot is Evolution 2.6, with 180 concurrent users at the time:

This shot is Firefox 1.5, with 48 concurrent users at the time:

11 comments:

Well, and that's despite Evolution & OpenOffice bloatage ;) It's "just" 85 users running OpenOffice, but almost 14 GB of memory is in use...

Actually, that 14GB is misleading. It's much lower on the first day after a reboot. The software that runs at night to backup the server uses up memory and seems to leak.

Thanks, Dave. I run our Linux terminal server (20 users and growing), so your blogs are always interesting.

14 GB is indeed misleading if you take it as the resources being used by OpenOffice. Look carefully at the buffers and cache numbers: more than 9 GB is used for file system metadata and data. This also explains why the number goes up after a backup: the kernel keeps everything it reads in memory because it has no better use for that memory anyway.

Could you please fix the screenshots? I can't see OOo at all, and when I click on Evolution, it gives me a 404.

Regarding the memory usage, top includes the buffers/cache in the used amount. If you look at the cached amount, about 9 GB is cached. So the real amount used is total_used - cached, or 13,994,516 - 9,617,048 = 4,377,468 KB, or about 4 GB in use. Use the command 'free' and look at the second line to see the totals with cache removed. Based on the memory and CPU usage, these boxes appear to be spec'ed for peak loads; with the load shown here you could probably get by with a 2- or 4-CPU box with 4 GB of RAM and nobody would notice.
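The subtraction above can be reproduced on a live system by reading `/proc/meminfo`, which is where `top` and `free` get their numbers. The following is a minimal sketch, not part of the original setup: the helper names (`parse_meminfo`, `real_used_kb`, `real_used_from_meminfo`) are hypothetical, and it assumes the usual `Key:   value kB` layout of `/proc/meminfo`. Note that the comment above subtracts only the cache, while the "-/+ buffers/cache" line of older `free` versions subtracts buffers as well; newer versions of `free` dropped that line in favour of an "available" column.

```python
import os
from typing import Dict


def parse_meminfo(text: str) -> Dict[str, int]:
    """Parse /proc/meminfo-style text ("Key:   123 kB") into {key: value_in_kB}."""
    fields: Dict[str, int] = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        if not sep:
            continue  # skip lines without a "Key:" prefix
        parts = rest.split()
        if parts:
            fields[key.strip()] = int(parts[0])
    return fields


def real_used_kb(total_used: int, buffers: int, cached: int) -> int:
    """Memory held by processes once kernel buffers and page cache are excluded (kB)."""
    return total_used - buffers - cached


def real_used_from_meminfo(fields: Dict[str, int]) -> int:
    """Hypothetical helper: 'used' as top reports it, minus Buffers and Cached."""
    total_used = fields["MemTotal"] - fields["MemFree"]
    return real_used_kb(total_used, fields.get("Buffers", 0), fields.get("Cached", 0))


if __name__ == "__main__":
    # The commenter's figures from the OpenOffice screenshot (cache only, in kB).
    print(real_used_kb(13_994_516, 0, 9_617_048))
    # On a Linux box, compute the same figure from the live kernel counters.
    if os.path.exists("/proc/meminfo"):
        with open("/proc/meminfo") as f:
            kb = real_used_from_meminfo(parse_meminfo(f.read()))
        print(f"used excluding buffers/cache: {kb / 1024 / 1024:.1f} GiB")
```

With the screenshot's numbers this gives 4,377,468 KB, roughly 4.2 GiB, matching the hand calculation.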


sweet kacey -

sweet kara -

sweet krissy -

sweet leah luv -

sweet models -

sweet n soft -

sweet russian girls -

sweet vids -

tailynn -

taras cam -

taryn thomas -

taste of uk -

teenage depot -

teen charms -

teen kara -

teen stars magazine -

tere 19 -

throated -

tiffany teen -

tiny gwen -

total super cuties -

toy desire -

toy stuffers -

trailer park tiffany -

trinitys toybox -

trista stevens -

trixie storm -

tushy lickers -

tushy massage -

tushy school -

twinks -

uk porn sluts -

vicky vane -

victoria holyns -

victoria pink -

view pornstars -

vika dreams -

virtual bjs -

watch 4 beauty -

wet tshirt -

why be dressed -

wild young honeys -

wow wendy -

x crib -

x gape -

x lez -

x movies -

you love lucy -

young and banged -

young cum gulpers -

young models casting -

zexy teens -

she got pimped -

1by day -

18 virgin sex -

702 daisy -

1000 facials -

abigail 18 -

abrianna -

action girls -

adorable allie -

alexa model -

alexis virgin -

alicia rhodes -

all ashlee -

allison virgin -

all over lexi -

all ruth -

amateur facials -

amateur galore -

amazing ty -

amber kelly -

amy amy amy -

amy virgin -

angelina virgin -

anna 19 -

ann angel -

annas assets -

anna virgin -

ashlee and serena -

ashley brookes -

asian suck dolls -

ass like whoa -

ass terdam -

aubrey addams -

aunt judys -

bad tushy -

beautiful bri -

beckys love -

bella spice -

big blowjob chicks -

big older women -

big wet butts -

bikini voyeur -

blazing vids -

blonde passions -

brandy jacobs -

brandys box -

bratty brittany -

britney virgin -

brutal dildos -

bums in action -

caged tushy -

cam girls planet -

candi malone -

casey parker -

caseys cam -

cassandra calogera -

cassie leanne -

cheaper than agf -

cherry jul -

cherry spot -

claudia nichols -

club cherries -

college town cuties -

cory heart -

country cutie -

courtney virgin -

creampied sweeties -

creampie thais -

cum 101 -

cum academy -

cute anne -

cute chloe -

cute lisa -

cutie pie teens -

cynthia sin -

danni virgin -

danya licious -

ddf busty -

diddy and serena -

diddy licious -

digital angel girls -

dirty home vids -

doctor tushy -

dollie deathray -

doms delight -

do my wife slut -

dream of dani -

drilled mouths -

durty girls -

easy teen sluts -

elite movie pass -

ember 19 -

emily 18 -

emilys world -

ericas fantasies -

eva luv -

eva virgin -

exotic kisses -

explicit art -

far east desires -

fiona luv -

first gay orgy -

fishnet models -

fruity babes -

fruity files -

fruity toys -

ftv girls -

full bush -

fun brunettes -

gia la shay -

girlfriend handjobs -

gotta love lucky -

guys caught wanking -

guys get fucked -

gypsy kay -

hailee jordan -

hands on hardcore -

hardcore saints -

hot braces -

hot legs and feet -

hot pool girls -

hot wife rio -

i cum alone -

i heart eden -

jacy andrews -

janet plays -

jen 18 -

jennas wish -

jenna virgin -

jenni carmichael -

jessica virgin -

jessie brooks -

jody love -

joi ryda -

just teen site -

kandis clubhouse -

karas handfull -

karen and amy -

karen dreams -

karen loves kate -

karen palms -

kari sweets -

karla spice -

kates playground -

kathy ash -

katrina 18 -

kaylas clubhouse -

kelly madison -

kendall blaze -

kimmie cream -

kira dreams -

kiss kristin -

kitty leigh -

kori kitten -

krissy love -

k scans -

kylies secret -

lainey moore -

lana brooke -

latin teen pass -

la zona modelos -

leia loves you -

lesbo mat -

lia 19 -

lick me girl -

little miss freckles -

lolly pop blowjobs -

love leialovely marilyn -

love your tits -

lunas cam -

luv alicia -

luv nikki -

maniac pass -

mariah spice -

marketa 4 you -

martina dreams -

matts models -

meat my ass -

megan michaels -

megan qt -

mega summers -

melissa midwest -

meredith 18 -

met art -

miami voyeur -

milfs on sticks -

molly meadows -

monster movie pass -

my pov bj -

my wife ashley -

nadia virgin -

naomi model -

nasty 19 -

natalie 18 -

natalie sparks -

naughty alysha -

naughty at home -

naughty belle -

naughty chrissy -

naughty dom -

naughty faith -

naughty girls zips -

naughty starri -

nebraska coeds -

next door nikki -

nina virgin -

no limit vids -

nubiles -

oc girls -

office gals -

older women -

only blowjob -

only tease -

only teen blowjobs -

open up girls -

pacinos world -

panty girl upskirts -

parkers panties -

paul markham teens -

peachez 18 -

phil flash -

pictures of dudes -

piper dreams -

play with paris -

plunder bunnies -

poolside giovanni -

poolside kelly -

porn fidelity -

porn flux -

princess blueyez -

pure dee -

rare asian -

rare asians -

real 8 teens -

real pantyhose teens -

rebecca love -

rock star pixies -

sapphic angels -

sara cutie -

sarah dreaming -

savanna virgin -

seanna teen -

selena luv -

selena spice -

serenity love -

sexxxy celeste -

sexy sindy -

sexy teen ella -

sexy teen sandy -

shabby virgins -

shana dreams -

shanes world -

share my cock -

sharing paris -

she got knocked up -

shelby virgin -

shy black teens -

sierra love -

simon scans -

sky modeling -

sleeping tushy -

slutty gal pals -

smokin hottie -

southern brooke -

southern kalee -

spice twins -

spoiled virgins -

spring break fantasy -

spunk bunnies -

spunky zips -

stacey online -

starla rocks -

stockings angels -

straight guys voyeur -

strapon lesbians -

strapon service -

stuffed petite -

stunning misty -

stunning serena -

suki model -

summer banks -

summer party time -

suzy stevens -

sweet adri -

sweet apples -

sweet danni -

sweet kacey -

sweet kara -

sweet krissy -

sweet leah luv -

sweet models -

sweet n soft -

sweet russian girls -

sweet vids -

tailynn -

taras cam -

taryn thomas -

taste of uk -

teenage depot -

teen charms -

teen kara -

teen stars magazine -

tere 19 -

throated -

tiffany teen -

tiny gwen -

total super cuties -

toy desire -

toy stuffers -

trailer park tiffany -

trinitys toybox -

trista stevens -

trixie storm -

tushy lickers -

tushy massage -

tushy school -

twinks -

uk porn sluts -

vicky vane -

victoria holyns -

victoria pink -

view pornstars -

vika dreams -

virtual bjs -

watch 4 beauty -

wet tshirt -

why be dressed -

wild young honeys -

wow wendy -

x crib -

x gape -

x lez -

x movies -

you love lucy -

young and banged -

young cum gulpers -

young models casting -

zexy teens -

squirt hunter -

1by day -

18 virgin sex -

702 daisy -

1000 facials -

abigail 18 -

abrianna -

action girls -

adorable allie -

alexa model -

alexis virgin -

alicia rhodes -

all ashlee -

allison virgin -

all over lexi -

all ruth -

amateur facials -

amateur galore -

amazing ty -

amber kelly -

amy amy amy -

amy virgin -

angelina virgin -

anna 19 -

ann angel -

annas assets -

anna virgin -

ashlee and serena -

ashley brookes -

asian suck dolls -

ass like whoa -

ass terdam -

aubrey addams -

aunt judys -

bad tushy -

beautiful bri -

beckys love -

bella spice -

big blowjob chicks -

big older women -

big wet butts -

bikini voyeur -

blazing vids -

blonde passions -

brandy jacobs -

brandys box -

bratty brittany -

britney virgin -

brutal dildos -

bums in action -

caged tushy -

cam girls planet -

candi malone -

casey parker -

caseys cam -

cassandra calogera -

cassie leanne -

cheaper than agf -

cherry jul -

cherry spot -

claudia nichols -

club cherries -

college town cuties -

cory heart -

country cutie -

courtney virgin -

creampied sweeties -

creampie thais -

cum 101 -

cum academy -

cute anne -

cute chloe -

cute lisa -

cutie pie teens -

cynthia sin -

danni virgin -

danya licious -

ddf busty -

diddy and serena -

diddy licious -

digital angel girls -

dirty home vids -

doctor tushy -

dollie deathray -

doms delight -

do my wife slut -

dream of dani -

drilled mouths -

durty girls -

easy teen sluts -

elite movie pass -

ember 19 -

emily 18 -

emilys world -

ericas fantasies -

eva luv -

eva virgin -

exotic kisses -

explicit art -

far east desires -

fiona luv -

first gay orgy -

fishnet models -

fruity babes -

fruity files -

fruity toys -

ftv girls -

full bush -

fun brunettes -

gia la shay -

girlfriend handjobs -

gotta love lucky -

guys caught wanking -

guys get fucked -

gypsy kay -

hailee jordan -

hands on hardcore -

hardcore saints -

hot braces -

hot legs and feet -

hot pool girls -

hot wife rio -

i cum alone -

i heart eden -

jacy andrews -

janet plays -

jen 18 -

jennas wish -

jenna virgin -

jenni carmichael -

jessica virgin -

jessie brooks -

jody love -

joi ryda -

just teen site -

kandis clubhouse -

karas handfull -

karen and amy -

karen dreams -

karen loves kate -

karen palms -

kari sweets -

karla spice -

kates playground -

kathy ash -

katrina 18 -

kaylas clubhouse -

kelly madison -

kendall blaze -

kimmie cream -

kira dreams -

kiss kristin -

kitty leigh -

kori kitten -

krissy love -

k scans -

kylies secret -

lainey moore -

lana brooke -

latin teen pass -

la zona modelos -

leia loves you -

lesbo mat -

lia 19 -

lick me girl -

little miss freckles -

lolly pop blowjobs -

love leialovely marilyn -

love your tits -

lunas cam -

luv alicia -

luv nikki -

maniac pass -

mariah spice -

marketa 4 you -

martina dreams -

matts models -

meat my ass -

megan michaels -

megan qt -

mega summers -

melissa midwest -

meredith 18 -

met art -

miami voyeur -

milfs on sticks -

molly meadows -

monster movie pass -

my pov bj -

my wife ashley -

nadia virgin -

naomi model -

nasty 19 -

natalie 18 -

natalie sparks -

naughty alysha -

naughty at home -

naughty belle -

naughty chrissy -

naughty dom -

naughty faith -

naughty girls zips -

naughty starri -

nebraska coeds -

next door nikki -

nina virgin -

no limit vids -

nubiles -

oc girls -

office gals -

older women -

only blowjob -

only tease -

only teen blowjobs -

open up girls -

pacinos world -

panty girl upskirts -

parkers panties -

paul markham teens -

peachez 18 -

phil flash -

pictures of dudes -

piper dreams -

play with paris -

plunder bunnies -

poolside giovanni -

poolside kelly -

porn fidelity -

porn flux -

princess blueyez -

pure dee -

rare asian -

rare asians -

real 8 teens -

real pantyhose teens -

rebecca love -

rock star pixies -

sapphic angels -

sara cutie -

sarah dreaming -

savanna virgin -

seanna teen -

selena luv -

selena spice -

serenity love -

sexxxy celeste -

sexy sindy -

sexy teen ella -

sexy teen sandy -

shabby virgins -

shana dreams -

shanes world -

share my cock -

sharing paris -

she got knocked up -

shelby virgin -

shy black teens -

sierra love -

simon scans -

sky modeling -

sleeping tushy -

slutty gal pals -

smokin hottie -

southern brooke -

southern kalee -

spice twins -

spoiled virgins -

spring break fantasy -

spunk bunnies -

spunky zips -

stacey online -

starla rocks -

stockings angels -

straight guys voyeur -

strapon lesbians -

strapon service -

stuffed petite -

stunning misty -

stunning serena -

suki model -

summer banks -

summer party time -

suzy stevens -

sweet adri -

sweet apples -

sweet danni -

sweet kacey -

sweet kara -

sweet krissy -

sweet leah luv -

sweet models -

sweet n soft -

sweet russian girls -

sweet vids -

tailynn -

taras cam -

taryn thomas -

taste of uk -

teenage depot -

teen charms -

teen kara -

teen stars magazine -

tere 19 -

throated -

tiffany teen -

tiny gwen -

total super cuties -

toy desire -

toy stuffers -

trailer park tiffany -

trinitys toybox -

trista stevens -

trixie storm -

tushy lickers -

tushy massage -

tushy school -

twinks -

uk porn sluts -

vicky vane -

victoria holyns -

victoria pink -

view pornstars -

vika dreams -

virtual bjs -

watch 4 beauty -

wet tshirt -

why be dressed -

wild young honeys -

wow wendy -

x crib -

x gape -

x lez -

x movies -

you love lucy -

young and banged -

young cum gulpers -

young models casting -

zexy teens -

teen hitchhikers -

1by day -

18 virgin sex -

702 daisy -

1000 facials -

abigail 18 -

abrianna -

action girls -

adorable allie -

alexa model -

alexis virgin -

alicia rhodes -

all ashlee -

allison virgin -

all over lexi -

all ruth -

amateur facials -

amateur galore -

amazing ty -

amber kelly -

amy amy amy -

amy virgin -

angelina virgin -

anna 19 -

ann angel -

annas assets -

anna virgin -

ashlee and serena -

ashley brookes -

asian suck dolls -

ass like whoa -

ass terdam -

aubrey addams -

aunt judys -

bad tushy -

beautiful bri -

beckys love -

bella spice -

big blowjob chicks -

big older women -

big wet butts -

bikini voyeur -

blazing vids -

blonde passions -

brandy jacobs -

brandys box -

bratty brittany -

britney virgin -

brutal dildos -

bums in action -

caged tushy -

cam girls planet -

candi malone -

casey parker -

caseys cam -

cassandra calogera -

cassie leanne -

cheaper than agf -

cherry jul -

cherry spot -

claudia nichols -

club cherries -

college town cuties -

cory heart -

country cutie -

courtney virgin -

creampied sweeties -

creampie thais -

cum 101 -

cum academy -

cute anne -

cute chloe -

cute lisa -

cutie pie teens -

cynthia sin -

danni virgin -

danya licious -

ddf busty -

diddy and serena -

diddy licious -

digital angel girls -

dirty home vids -

doctor tushy -

dollie deathray -

doms delight -

do my wife slut -

dream of dani -

drilled mouths -

durty girls -

easy teen sluts -

elite movie pass -

ember 19 -

emily 18 -

emilys world -

ericas fantasies -

eva luv -

eva virgin -

exotic kisses -

explicit art -

far east desires -

fiona luv -

first gay orgy -

fishnet models -

fruity babes -

fruity files -

fruity toys -

ftv girls -

full bush -

fun brunettes -

gia la shay -

girlfriend handjobs -

gotta love lucky -

guys caught wanking -

guys get fucked -

gypsy kay -

hailee jordan -

hands on hardcore -

hardcore saints -

hot braces -

hot legs and feet -

hot pool girls -

hot wife rio -

i cum alone -

i heart eden -

jacy andrews -

janet plays -

jen 18 -

jennas wish -

jenna virgin -

jenni carmichael -

jessica virgin -

jessie brooks -

jody love -

joi ryda -

just teen site -

kandis clubhouse -

karas handfull -

karen and amy -

karen dreams -

karen loves kate -

karen palms -

kari sweets -

karla spice -

kates playground -

kathy ash -

katrina 18 -

kaylas clubhouse -

kelly madison -

kendall blaze -

kimmie cream -

kira dreams -

kiss kristin -

kitty leigh -

kori kitten -

krissy love -

k scans -

kylies secret -

lainey moore -

lana brooke -

latin teen pass -

la zona modelos -

leia loves you -

lesbo mat -

lia 19 -

lick me girl -

little miss freckles -

lolly pop blowjobs -

love leialovely marilyn -

love your tits -

lunas cam -

luv alicia -

luv nikki -

maniac pass -

mariah spice -

marketa 4 you -

martina dreams -

matts models -

meat my ass -

megan michaels -

megan qt -

mega summers -

melissa midwest -

meredith 18 -

met art -

miami voyeur -

milfs on sticks -

molly meadows -

monster movie pass -

my pov bj -

my wife ashley -

nadia virgin -

naomi model -

nasty 19 -

natalie 18 -

natalie sparks -

naughty alysha -

naughty at home -

naughty belle -

naughty chrissy -

naughty dom -

naughty faith -

naughty girls zips -

naughty starri -

nebraska coeds -

next door nikki -

nina virgin -

no limit vids -

nubiles -

oc girls -

office gals -

older women -

only blowjob -

only tease -

only teen blowjobs -

open up girls -

pacinos world -

panty girl upskirts -

parkers panties -

paul markham teens -

peachez 18 -

phil flash -

pictures of dudes -

piper dreams -

play with paris -

plunder bunnies -

poolside giovanni -

poolside kelly -

porn fidelity -

porn flux -

princess blueyez -

pure dee -

rare asian -

rare asians -

real 8 teens -

real pantyhose teens -

rebecca love -

rock star pixies -

sapphic angels -

sara cutie -

sarah dreaming -

savanna virgin -

seanna teen -

selena luv -

selena spice -

serenity love -

sexxxy celeste -

sexy sindy -

sexy teen ella -

sexy teen sandy -

shabby virgins -

shana dreams -

shanes world -

share my cock -

sharing paris -

she got knocked up -

shelby virgin -

shy black teens -

sierra love -

simon scans -

sky modeling -

sleeping tushy -

slutty gal pals -

smokin hottie -

southern brooke -

southern kalee -

spice twins -

spoiled virgins -

spring break fantasy -

spunk bunnies -

spunky zips -

stacey online -

starla rocks -

stockings angels -

straight guys voyeur -

strapon lesbians -

strapon service -

stuffed petite -

stunning misty -

stunning serena -

suki model -

summer banks -

summer party time -

suzy stevens -

sweet adri -

sweet apples -

sweet danni -

sweet kacey -

sweet kara -

sweet krissy -

sweet leah luv -

sweet models -

sweet n soft -

sweet russian girls -

sweet vids -

tailynn -

taras cam -

taryn thomas -

taste of uk -

teenage depot -

teen charms -

teen kara -

teen stars magazine -

tere 19 -

throated -

tiffany teen -

tiny gwen -

total super cuties -

toy desire -

toy stuffers -

trailer park tiffany -

trinitys toybox -

trista stevens -

trixie storm -

tushy lickers -

tushy massage -

tushy school -

twinks -

uk porn sluts -

vicky vane -

victoria holyns -

victoria pink -

view pornstars -

vika dreams -

virtual bjs -

watch 4 beauty -

wet tshirt -

why be dressed -

wild young honeys -

wow wendy -

x crib -

x gape -

x lez -

x movies -

you love lucy -

young and banged -

young cum gulpers -

young models casting -

zexy teens -

teeny bopper club -

1by day -

702 daisy -

1000 facials -

abrianna -

all ashlee -

allison 19 -

all over lexi -

alyssa teen -

amateur galore -

amazing lisa -

amy amy amy -

anette dawn -

ann angel -

annas assets -

ashley brookes -

aunt judys -

bad tushy -

barely evil -

big older women -

big wet butts -

bikini voyeur -

blonde passions -

blue fantasies -

bondage and spanking -

boobs pl -

booty full babes -

brandys box -

bratty brittany -

brazzers pass -

brutal dildos -

busty z -

butts and blacks -

caged tushy -

cali logan -

cheerleader teasers -

cherry jul -

cum 101 -

cum academy -

cum faced asians -

cute chloe -

ddf busty -

diddy licious -

digital angel girls -

dirty lilly -

doctor adventures -

dotor tushy -

dp overload -

easy teen sluts -

emily 18 -

emma 18 -

emy 18 -

enslaved gals -

ericas fantasies -

ero model xxx -

erotic bpm -

explicit art -

fatal beauties -

fresh teen eroticaftv girls -

fun brunettes -

girlfriend handjobs -

goth chicks -

gothic sluts -

gotta love lucky -

guys get fucked -

hands on hardcore -

hardcore saints -

home made porn -

hot chicks big asses -

hot legs and feet -

hot wife rio -

i cum alone -

intermixed sluts -

irina sky -

jenni carmicheal -

jenny reid -

jessica precious -

judy star -

karas handfull -

karen dreams -

karen loves kate -

karissa booty -

karla spice -

kates playground -

katrina 18 -

kelly madison -

kelly q -

kendall blaze -

kira dreams -

kiss kristin -

kori kitten -

krissy love -

kylies secret -

lady boys and shemales -

lanny barbie -

la zona modelos -

lesbo mat -

lick me girl -

little liza -

little nadia -

love leia -

lovely anne -

lovely irene -

lovely tera -

love your tits -

lust for anal -

lusty busty chix -

maniac pass -

martina dreams -

matts models -

megan qt -

megan summers -

met art -

met models -

miss bunny -

my wife ashley -

nasty 19 -

naughty at home -

naughty belle -

naughty fun -

next door nancy -

next door nikki -

nicole graves -

nubiles -

oc girls -

older women -

only tease -

only teen blowjobs -

oral quickies -

pacinos world -

patricia petite -

paul markham teens -

peachez 18 -

phil flash -

planet corrina -

planet katie -

planet mandy -

planet nikki -

planet summer -

play with paris -

porn fidelity -

porn flux -

pregnant girlies -

pretty teen movies -

princess blueyez -

private cuties -

rare asian -

real 8 teens -

reality teens -

rebecca love -

rubber dollies -

sapphic angels -

scar 13 -

seanna teen -

sex city asia -

sex pro adventures -

sexy older moms -

sexy teen sandy -

share my cock -

she got knocked up -

shemales and transvestites -

simon scans -

sleeping tushy -

slippery sara -

smokin hottie -

southern brooke -

southern kalee -

squirting files -

strapon lesbians -

stuffed petite -

stunning serena -

summer banks -

sweetheart ashley -

sweet krissy -

sweet leah luv -

sweet models -

sweet n soft -

taryn thomas -

taste of uk -

teenage depot -

teen bitch club -

teen charms -

teen chloe -

teen flood -

teenie palace -

teen karma -

teen kayla -

teen keera -

teen labia -

teen larisa -

teens bj -

teen stars magazine -

tera 19 -

throated -

total super cuties -

toy desire -

tracy parks -

tranny chic -

tushy lickers -

tushy massage -

tushy school -

twinks -

us shemales -

view pornstars -

vika dreams -

vip xxx pass -

wild shemale -

x crib -

x gape -

x lez -

zeina heart -

zoey and friends -

the big swallow -

1by day -

702 daisy -

1000 facials -

abrianna -

all ashlee -

allison 19 -

all over lexi -

alyssa teen -

amateur galore -

amazing lisa -

amy amy amy -

anette dawn -

ann angel -

annas assets -

ashley brookes -

aunt judys -

bad tushy -

barely evil -

big older women -

big wet butts -

bikini voyeur -

blonde passions -

blue fantasies -

bondage and spanking -

boobs pl -

booty full babes -

brandys box -

bratty brittany -

brazzers pass -

brutal dildos -

busty z -

butts and blacks -

caged tushy -

cali logan -

cheerleader teasers -

cherry jul -

cum 101 -

cum academy -

cum faced asians -

cute chloe -

ddf busty -

diddy licious -

digital angel girls -

dirty lilly -

doctor adventures -

dotor tushy -

dp overload -

easy teen sluts -

emily 18 -

emma 18 -

emy 18 -

enslaved gals -

ericas fantasies -

ero model xxx -

erotic bpm -

explicit art -

fatal beauties -

fresh teen eroticaftv girls -

fun brunettes -

girlfriend handjobs -

goth chicks -

gothic sluts -

gotta love lucky -

guys get fucked -

hands on hardcore -

hardcore saints -

home made porn -

hot chicks big asses -

hot legs and feet -

hot wife rio -

i cum alone -

intermixed sluts -

irina sky -

jenni carmicheal -

jenny reid -

jessica precious -

judy star -

karas handfull -

karen dreams -

karen loves kate -

karissa booty -

karla spice -

kates playground -

katrina 18 -

kelly madison -

kelly q -

kendall blaze -

kira dreams -

kiss kristin -

kori kitten -

krissy love -

kylies secret -

lady boys and shemales -

lanny barbie -

la zona modelos -

lesbo mat -

lick me girl -

little liza -

little nadia -

love leia -

lovely anne -

lovely irene -

lovely tera -

love your tits -

lust for anal -

lusty busty chix -

maniac pass -

martina dreams -

matts models -

megan qt -

megan summers -

met art -

met models -

miss bunny -

my wife ashley -

nasty 19 -

naughty at home -

naughty belle -

naughty fun -

next door nancy -

next door nikki -

nicole graves -

nubiles -

oc girls -

older women -

only tease -

only teen blowjobs -

oral quickies -

pacinos world -

patricia petite -

paul markham teens -

peachez 18 -

phil flash -

planet corrina -

planet katie -

planet mandy -

planet nikki -

planet summer -

play with paris -

porn fidelity -

porn flux -

pregnant girlies -

pretty teen movies -

princess blueyez -

private cuties -

rare asian -

real 8 teens -

reality teens -

rebecca love -

rubber dollies -

sapphic angels -

scar 13 -

seanna teen -

sex city asia -

sex pro adventures -

sexy older moms -

sexy teen sandy -

share my cock -

she got knocked up -

shemales and transvestites -

simon scans -

sleeping tushy -

slippery sara -

smokin hottie -

southern brooke -

southern kalee -

squirting files -

strapon lesbians -

stuffed petite -

stunning serena -

summer banks -

sweetheart ashley -

sweet krissy -

sweet leah luv -

sweet models -

sweet n soft -

taryn thomas -

taste of uk -

teenage depot -

teen bitch club -

teen charms -

teen chloe -

teen flood -

teenie palace -

teen karma -

teen kayla -

teen keera -

teen labia -

teen larisa -

teens bj -

teen stars magazine -

tera 19 -

throated -

total super cuties -

toy desire -

tracy parks -

tranny chic -

tushy lickers -

tushy massage -

tushy school -

twinks -

us shemales -

view pornstars -

vika dreams -

vip xxx pass -

wild shemale -

x crib -

x gape -

x lez -

zeina heart -

zoey and friends -

tug jobs -

1by day -

702 daisy -

1000 facials -

abrianna -

all ashlee -

allison 19 -

all over lexi -

alyssa teen -

amateur galore -

amazing lisa -

amy amy amy -

anette dawn -

ann angel -

annas assets -

ashley brookes -

aunt judys -

bad tushy -

barely evil -

big older women -

big wet butts -

bikini voyeur -

blonde passions -

blue fantasies -

bondage and spanking -

boobs pl -

booty full babes -

brandys box -

bratty brittany -

brazzers pass -

brutal dildos -

busty z -

butts and blacks -

caged tushy -

cali logan -

cheerleader teasers -

cherry jul -

cum 101 -

cum academy -

cum faced asians -

cute chloe -

ddf busty -

diddy licious -

digital angel girls -

dirty lilly -

doctor adventures -

dotor tushy -

dp overload -

easy teen sluts -

emily 18 -

emma 18 -

emy 18 -

enslaved gals -

ericas fantasies -

ero model xxx -

erotic bpm -

explicit art -

fatal beauties -

fresh teen eroticaftv girls -

fun brunettes -

girlfriend handjobs -

goth chicks -

gothic sluts -

gotta love lucky -

guys get fucked -

hands on hardcore -

hardcore saints -

home made porn -

hot chicks big asses -

hot legs and feet -

hot wife rio -

i cum alone -

intermixed sluts -

irina sky -

jenni carmicheal -

jenny reid -

jessica precious -

judy star -

karas handfull -

karen dreams -

karen loves kate -

karissa booty -

karla spice -

kates playground -

katrina 18 -

kelly madison -

kelly q -

kendall blaze -

kira dreams -

kiss kristin -

kori kitten -

krissy love -

kylies secret -

lady boys and shemales -

lanny barbie -